EDGECORTIX ANNOUNCES SAKURA AI CO-PROCESSOR DELIVERING INDUSTRY-LEADING LOW LATENCY AND ENERGY EFFICIENCY

EdgeCortix® Inc., the innovative fabless semiconductor design company with a software-first approach focused on delivering class-leading compute efficiency and latency for edge artificial intelligence (AI) inference, has unveiled the architecture, performance metrics, and delivery timing for its new industry-leading, energy-efficient AI inference co-processor.

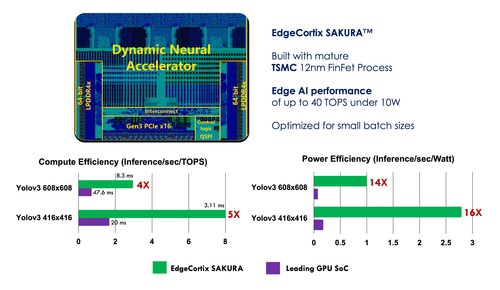

Fig: EdgeCortix SAKURA chip die photo, along with an AI inference efficiency comparison against the leading GPU for edge use cases on the YOLOv3 object detection benchmark. Current leading GPU SoC at 32 TOPS and 30 W TDP vs. EdgeCortix SAKURA at 40 TOPS and 10 W TDP. SAKURA delivers a power-efficiency advantage of over 10X. All data normalized to the baseline of YOLOv3 at 608×608 with batch size 1.
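
For readers who want to relate the caption's peak figures to the efficiency claim, the short Python sketch below computes peak TOPS per watt from the four numbers quoted in the caption. It is illustrative only: the function and variable names are hypothetical, and the calculation uses datasheet-style peak values, not the measured YOLOv3 results behind the chart. On peak figures alone the ratio comes to 3.75X, so the over-10X figure presumably reflects measured benchmark efficiency at batch size 1 and is not a ratio of peak specifications.

```python
# Peak figures quoted in the figure caption.
gpu_tops, gpu_tdp_w = 32.0, 30.0        # current leading GPU SoC for edge
sakura_tops, sakura_tdp_w = 40.0, 10.0  # EdgeCortix SAKURA

def peak_tops_per_watt(tops, tdp_w):
    """Peak throughput divided by TDP; says nothing about real utilization."""
    return tops / tdp_w

gpu_eff = peak_tops_per_watt(gpu_tops, gpu_tdp_w)
sakura_eff = peak_tops_per_watt(sakura_tops, sakura_tdp_w)
peak_ratio = sakura_eff / gpu_eff

print(f"GPU SoC: {gpu_eff:.2f} peak TOPS/W")
print(f"SAKURA:  {sakura_eff:.2f} peak TOPS/W")
print(f"Peak-spec ratio: {peak_ratio:.2f}x")
```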

EdgeCortix officially introduced its flagship energy-efficient AI co-processor (accelerator), branded SAKURA™. The company announced that SAKURA, designed entirely at its Tokyo-based development center, is produced by TSMC on the popular 12nm FinFET technology and will be available as low-power PCIe-based development boards to companies participating in the EdgeCortix Early Access Program (EAP) in July 2022.

“SAKURA is revolutionary from both a technical and competitive perspective, delivering well over a 10X performance/watt advantage compared to current AI inference solutions based on traditional graphics processing units (GPUs), especially for real-time edge applications,” said Sakyasingha Dasgupta, CEO and Founder of EdgeCortix. “After validating our AI processor architecture design with multiple field-programmable gate array (FPGA) customers in production, we designed SAKURA as a co-processor that can be plugged in alongside a host processor in nearly all existing systems to significantly accelerate AI inference. Using our patented runtime-reconfigurable interconnect technology, SAKURA is inherently more flexible than traditional processors and can achieve near-optimal compute utilization in contrast to most AI processors that have been developed over the last 40+ years.”

SAKURA is powered by a single-core, 40 trillion operations per second (TOPS) Dynamic Neural Accelerator® (DNA) Intellectual Property (IP), EdgeCortix’s proprietary neural processing engine with a built-in reconfigurable data path connecting all compute engines. DNA enables the new SAKURA AI co-processor to run multiple deep neural network models together with ultra-low latency while preserving exceptional TOPS utilization. This unique attribute is key to enhancing the processing speed, energy efficiency, and longevity of the system-on-chip, providing exceptional total cost of ownership benefits. The DNA IP is specifically optimized for inference with streaming and high-resolution data.

Key industrial segments to which the SAKURA performance profile is ideally suited include transportation / autonomous vehicles, defense, security, 5G communications, augmented & virtual reality, smart manufacturing, smart cities, smart retail, and robotics, as well as all markets that require low-power, low-latency AI inference.

The company announced that SAKURA will also be available to customers for purchase in multiple hardware form factors, and the underlying IP can be licensed in conjunction with EdgeCortix’s software stack for customers designing their proprietary semiconductors.

Key SAKURA features include:

- Up to 40 TOPS (single-chip version) and 200 TOPS (multi-chip version).

- PCIe device TDP: 10 W to 15 W.

- Typical model power consumption ~5W.

- 2×64 LPDDR4x – 16 GB.

- PCIe Gen 3 up to 16 GB/s bandwidth.

- Two form factors – Dual M.2 and Low-profile PCIe.
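
As a rough check on the "up to 16 GB/s" figure in the list above, the sketch below computes the usable one-direction bandwidth of a PCIe Gen 3 link from the standard 8 GT/s per-lane rate and 128b/130b encoding. The lane counts are assumptions for illustration: the announcement does not state a link width, and the helper name is hypothetical. A x16 link works out to about 15.75 GB/s before protocol overhead, which is consistent with the quoted upper bound.

```python
GT_PER_S_PER_LANE = 8.0          # PCIe Gen 3 raw signalling rate per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding used by Gen 3

def pcie_gen3_gb_per_s(lanes):
    """Usable one-direction bandwidth in GB/s, before packet overhead."""
    bits_per_byte = 8
    return GT_PER_S_PER_LANE * ENCODING_EFFICIENCY / bits_per_byte * lanes

# Lane counts are assumed; the announcement does not specify the link width.
for lanes in (4, 8, 16):
    print(f"x{lanes}: {pcie_gen3_gb_per_s(lanes):.2f} GB/s")
```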